Prism Deep Pixinsight Windows/MacOs

– Optimized app size.

– Improved support for multiple GPUs on Windows platforms.

– Improved inference speed on MacOS Silicon.

Prism Deep – PixinSight Version

Important change for Windows users (PixInsight / PI builds using GPU acceleration)

Removed DirectML inference due to incorrect model inference results.

The application now enforces strict CUDA-based inference for GPU acceleration.

NVIDIA GPUs: continue to benefit from hardware acceleration (CUDA) after updating.

AMD GPUs: hardware acceleration is currently unavailable; inference will run on the CPU instead.

Reason for the change

DirectML was originally introduced to support a wider range of GPUs.

However, testing revealed that DirectML produced incorrect inference outputs in some cases.

Ensuring correct and reliable results has been prioritized over broader GPU compatibility.

Impact

Users with AMD GPUs may experience longer processing times due to CPU execution.

Output quality and reliability are now significantly improved and consistent.

Other Improvements

Temp Stretch normalization redesigned:

Implemented a full analytic solution for the normalization process.

Significantly improved robustness and stability.

Additional Notes

Update to the latest version is strongly recommended for all users.

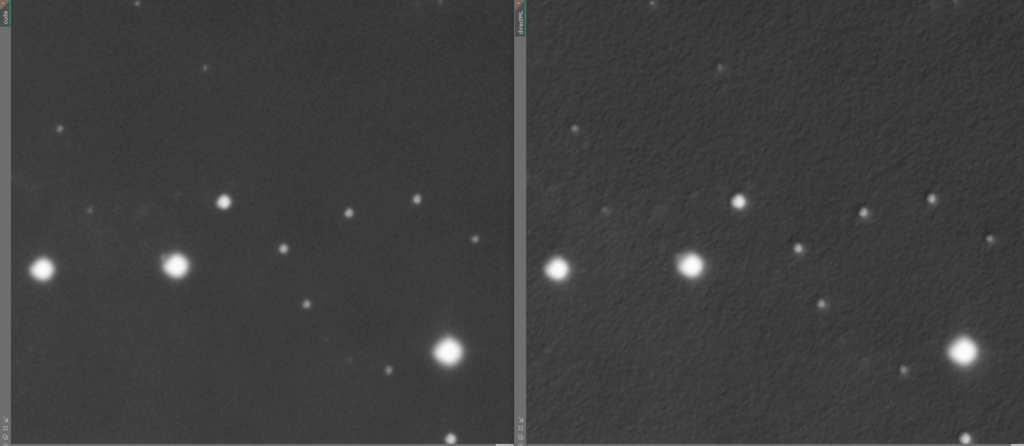

The example image included shows:

Left: correct inference using CUDA.

Right: incorrect inference produced by DirectML.

Prism Deep Photoshop Plugin

Bug Fixes

Fixed 16-bit image scaling: Photoshop uses a 0–32768 range for 16-bit data, not 0–65535. The incorrect divisor caused pixel values to arrive at the model at half intensity, resulting in lost nebulosity detail and artificially brightened stars.

Upgraded Core ML model from FP16 to FP32 precision (237 MB). FP16 introduced rounding errors in the NAFNet residual connection, degrading output quality compared to the Python reference.

Added input/output clamping to 0–1 on all inference paths to prevent out-of-range values.

Result

Plugin output is now identical to the Python reference